When AI Agents Scrum: Why Product Goal, Sprint Goal, and Definition of Done Matter More Than Ever

Imagine a Scrum team where nobody calls in sick, nobody loses focus during afternoon stand-ups, and nobody needs two weeks to onboard onto a codebase. A team that codes, reviews, tests, and deploys around the clock, adapting in real time. This is not science fiction anymore. AI agent frameworks are making multi-agent software delivery teams a serious conversation in engineering leadership circles.

But before CTOs start sketching org charts populated entirely by agents, there is a hard-won truth from twenty-five years of agile practice that deserves attention:

The bottleneck in product delivery has rarely been the speed of human hands. It has been the clarity of intent.

Speed without direction is not productivity; it is drift at scale.

We believe that the three commitments defined in the 2020 Scrum Guide (the Product Goal, the Sprint Goal, and the Definition of Done) will become, if anything, more important in a world of AI-augmented delivery.

Commitments: Transparency, Focus, and a Measure of Progress

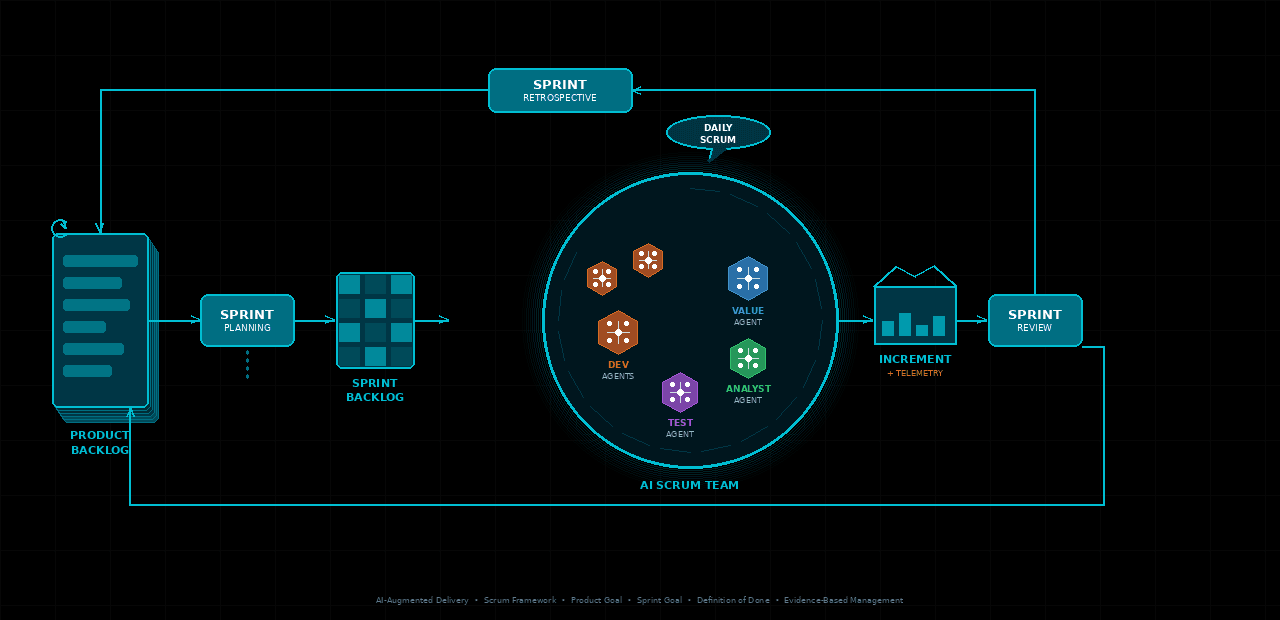

Each of the artefacts in the Scrum Framework contains a commitment. The Product Backlog has the Product Goal, the Sprint Backlog has the Sprint Goal, and the Increment has the Definition of Done.

These aren’t administrative checkboxes. They are transparency mechanisms. Without them, teams inspect and adapt based on incomplete or misaligned information. With them, every decision (what to build, what to skip, when to stop) has a reference point.

For human teams, the commitments help compensate for the natural entropy of collaboration: differing priorities, context-switching, and communication gaps. For AI agent teams, the challenge is different but equally real. Agents don’t drift from goals out of distraction. They drift because goals were never made sufficiently explicit.

An AI Agent Scrum Team in Practice

Consider a fictional product team at a B2B SaaS company, call it Veloxa. The engineering organisation has deployed a team of AI agents to build a new real-time analytics dashboard. The team consists of a Value Maximisation Agent acting as Product Owner, an Analyst Agent responsible for backlog refinement, a set of Cross-Functional Developer Agents, a Test Agent team, and a Deployment Agent.

Without structure, here is what happens: each agent optimises locally. The Developer Agents ship features. The Test Agents validate against a spec. The Deployment Agent pushes builds. The system hums. But three weeks in, the leadership team looks at the product and asks: is this actually solving the problem our customers have? No agent can answer that question. Nobody set a Product Goal.

Now apply the Scrum commitments, adapted for the pace at which AI agents can work.

Product Goal (Weekly)

At the start of each week, the Value Maximisation Agent is given an explicit Product Goal aligned to a business outcome: “Enable enterprise customers to drill down to user-level retention data within three clicks, reducing support ticket volume by 20%.” This becomes the strategic lens through which the entire Product Backlog is ordered and refined. Every item that doesn’t serve this goal is deprioritised. The agents now have a why. From the Product Goal, the Value Maximisation Agent works with the Analyst Agent to create a set of focused objectives, which in turn shape the Sprint Goal.

Sprint Goal (Daily)

AI agents are fast. They are capable of compressing a two-week sprint into twenty-four hours of continuous, focused work, so the Sprint Goal becomes a daily commitment: “By the end of the day, deliver a working filter component that allows segment-level drill-down, integrated with live data.” This creates coherence. The Developer Agents are not building separate features in parallel isolation; they are collaborating toward a single outcome. The Sprint Goal, as per the Scrum Guide, “creates coherence and focus, encouraging the Scrum Team to work together rather than on separate initiatives.”

Definition of Done: includes deployment to production

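This is where things get genuinely exciting. When agents can build, test, and deploy within hours, “Done” no longer has to stop at a test environment; it can extend all the way to production. We return to this in detail after looking at how the work is sliced and tested.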

Slicing the Backlog: Where the Analyst Agent Earns Its Place

One of the most underestimated challenges in any delivery system, human or AI, is the quality of the work items themselves. Vague, oversized, or poorly understood backlog items are among the leading causes of wasted effort and missed Sprint Goals. An AI agent team does not escape this problem; it amplifies it. A Developer Agent given a poorly defined story will implement it confidently and incorrectly.

This is where the Analyst Agent plays a critical role. Working from the Product Goal, the Analyst Agent interrogates each backlog item before it enters a Sprint. It asks clarifying questions: What is the user’s actual intent? What edge cases haven’t been considered? Can this item be sliced into smaller, independently deliverable increments? It challenges assumptions embedded in acceptance criteria and surfaces dependencies.
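To make the Analyst Agent's questions concrete, here is a minimal sketch of a readiness check that an item might have to pass before entering a Sprint. The `BacklogItem` fields, the `readiness_issues` function, and the four-hour size limit are all hypothetical names and values chosen for illustration; a real agent would apply far richer judgement than these rules.

```python
from dataclasses import dataclass, field


@dataclass
class BacklogItem:
    """Hypothetical backlog item; the field names are illustrative assumptions."""
    title: str
    user_intent: str = ""
    acceptance_criteria: list[str] = field(default_factory=list)
    open_dependencies: list[str] = field(default_factory=list)
    estimated_hours: float = 0.0


def readiness_issues(item: BacklogItem, max_hours: float = 4.0) -> list[str]:
    """Return the reasons an item is not ready for a Sprint (empty list means ready)."""
    issues = []
    if not item.user_intent.strip():
        issues.append("user intent is not stated")
    if not item.acceptance_criteria:
        issues.append("no acceptance criteria")
    if item.open_dependencies:
        issues.append("unresolved dependencies: " + ", ".join(item.open_dependencies))
    if item.estimated_hours > max_hours:
        # max_hours is an assumed threshold for a daily Sprint, not a Scrum rule.
        issues.append("too large; slice into smaller increments")
    return issues


vague = BacklogItem(title="Improve dashboard", estimated_hours=12)
for issue in readiness_issues(vague):
    print(issue)
```

The point of returning reasons rather than a bare yes or no is that each reason is a prompt for the next clarifying question.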

Good slicing is not about making stories smaller for the sake of it. It is about reducing the surface area of uncertainty. In a human team, this work is done through conversation: backlog refinement sessions, three amigos meetings, hallway discussions. In an AI agent team, the Analyst Agent formalises and accelerates that process, producing well-structured, right-sized items that a development pipeline can act on with confidence.

Test Agents and Developer Agents: A Productive Tension

Perhaps the most compelling dynamic in an AI agent delivery team is the relationship between the Test Agents and the Developer Agents, particularly when shaped by Test-Driven Development.

In a TDD approach, the Test Agent doesn’t sit at the end of the pipeline waiting to validate finished work. It leads. It writes failing tests first, expressing the intent of the feature before a single line of implementation exists. The Developer Agent then works to make those tests pass. This reversal (test first, code second) forces precision. It is very difficult to write a meaningful test without understanding exactly what the system is supposed to do, and that clarity benefits the entire team.
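As an illustration of the test-first order, here is a small sketch using Python's standard `unittest` module. The `filter_by_segment` function and the shape of the user records are assumptions invented for this example, loosely modelled on the segment-level drill-down in the Sprint Goal above; they are not taken from any real Veloxa codebase.

```python
import unittest


# Step 1 (Test Agent): state the expected behaviour before any implementation exists.
# Run at this point, the tests fail because filter_by_segment is not yet defined.
class SegmentDrillDownTest(unittest.TestCase):
    def test_returns_only_users_in_requested_segment(self):
        users = [
            {"id": 1, "segment": "enterprise", "retained": True},
            {"id": 2, "segment": "smb", "retained": False},
            {"id": 3, "segment": "enterprise", "retained": False},
        ]
        result = filter_by_segment(users, "enterprise")
        self.assertEqual([u["id"] for u in result], [1, 3])

    def test_empty_input_returns_empty_list(self):
        self.assertEqual(filter_by_segment([], "enterprise"), [])


# Step 2 (Developer Agent): the simplest implementation that makes the tests pass.
def filter_by_segment(users, segment):
    """Keep only the user records whose 'segment' field matches."""
    return [u for u in users if u.get("segment") == segment]
```

Saved as a module, this can be run with `python -m unittest`. The tests double as a precise statement of intent: anything the Developer Agent builds beyond what they require is, by definition, unrequested.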

But the Test Agent’s contribution doesn’t stop at writing tests. In a well-designed AI agent team, Test Agents actively challenge the design decisions made by Developer Agents. When a Developer Agent produces an implementation, the Test Agent interrogates it: Is this approach maintainable? Does this design introduce hidden coupling? Could this logic be expressed more clearly? This is not just quality assurance; it is a form of continuous code review embedded into the delivery loop itself.

The productive tension between Test Agents and Developer Agents mirrors what the best engineering teams already know: having someone question your design choices is not an obstacle to progress; it is the mechanism by which quality compounds over time. TDD gives that tension structure. It ensures the conversation between test and code is not arbitrary but anchored to the behaviour the product is expected to exhibit.

At Veloxa, within each daily Sprint, the Test Agents write acceptance tests derived from the Analyst Agent’s refined stories. The Developer Agents implement. The Test Agents challenge. The cycle runs, and by the end of the day, the Increment is not just built; it is scrutinised.

Redefining “Done” for the Age of AI Delivery

For many human Scrum teams, the Definition of Done stops at “code reviewed, tests passing, deployed to a test environment.” Production deployment is a separate, slower-moving process, often gated by release management. AI agent teams can change this entirely.

When a team of agents can build, test, and deploy within hours, the Definition of Done can and should include deployment to production. This should not involve cutting corners or accumulating technical debt. Rather, it is about taking advantage of the technological capability now available; the feedback loop that previously took weeks can close in hours.
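One way to keep such a Definition of Done explicit is to encode it as a gate that either passes or names what is missing. The sketch below is a deliberately simple assumption of how that might look: the `IncrementStatus` fields and function names are hypothetical, and the four criteria are taken from the examples in this article rather than from any prescribed list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IncrementStatus:
    """Hypothetical snapshot of an Increment; field names are illustrative."""
    code_reviewed: bool
    tests_passing: bool
    deployed_to_production: bool
    telemetry_enabled: bool


def unmet_done_criteria(status: IncrementStatus) -> list[str]:
    """List every Definition of Done criterion the Increment has not yet met."""
    checks = {
        "code reviewed": status.code_reviewed,
        "tests passing": status.tests_passing,
        "deployed to production": status.deployed_to_production,
        "telemetry enabled": status.telemetry_enabled,
    }
    return [name for name, met in checks.items() if not met]


def is_done(status: IncrementStatus) -> bool:
    """An Increment is Done only when no criterion is outstanding."""
    return not unmet_done_criteria(status)


staged_only = IncrementStatus(
    code_reviewed=True,
    tests_passing=True,
    deployed_to_production=False,
    telemetry_enabled=True,
)
print(is_done(staged_only), unmet_done_criteria(staged_only))
```

Under this stricter definition, work that would count as finished on many human teams is reported as not Done until it is live.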

This is also where Evidence-Based Management offers a useful lens. The moment the analytics dashboard feature is live at Veloxa, the Deployment Agent kicks off automated instrumentation: feature adoption rates, time-on-task, error rates, and support ticket deflection are captured in real time. The Value Maximisation Agent reads these signals and compares them against the Product Goal’s target outcome.

This is the empirical loop at the heart of Scrum, accelerated. The Scrum Guide describes Scrum as founded on empiricism: transparency, inspection, and adaptation. With production telemetry wired directly into the delivery cycle, the agents can answer these questions within hours of shipping:

Did the value customers experience actually improve?

Did we close the gap between what customers have today and what they need?

If the telemetry shows the feature isn’t landing, the Value Maximisation Agent surfaces that at the next Sprint Planning cycle. The Product Goal may be refined. The next Sprint Goal is reoriented. The system self-corrects, not after a quarterly review, but overnight.
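The comparison the Value Maximisation Agent makes can be reduced to simple arithmetic. The sketch below checks an observed support ticket count against the 20% reduction named in the example Product Goal. The function names and the ticket counts are hypothetical; real telemetry would also need a comparable time window and enough data to rule out noise.

```python
def relative_reduction(baseline: float, current: float) -> float:
    """Fractional drop from baseline to current (0.25 means a 25% reduction)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) / baseline


def goal_met(baseline_tickets: float, current_tickets: float,
             target_reduction: float = 0.20) -> bool:
    """True when the observed reduction reaches the Product Goal's target."""
    return relative_reduction(baseline_tickets, current_tickets) >= target_reduction


# Illustrative numbers only: weekly ticket counts before and after release.
baseline, after_release = 500, 450
if not goal_met(baseline, after_release):
    print("Target not reached; raise at the next Sprint Planning.")
```

With these made-up figures the reduction is 10%, short of the 20% target, so the shortfall would be surfaced at the next planning cycle.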

What This Means for Engineering Leaders

The most important thing CTOs should take away from the coming wave of AI-augmented delivery is this: the human work shifts from execution to intent. The agents can handle the how. They need people, or at minimum, well-governed AI systems, to keep the why sharp.

Product Goals, Sprint Goals, and Definitions of Done are not mere elements of agile theatre. Remove them from a human Scrum team, and you get a team that delivers confidently in the wrong direction. Remove them from an AI agent team, and you get the same outcome, just faster and more expensive.

The teams that will succeed with AI-augmented delivery are not those who automate fastest. They are the ones who invest in the quality of their commitments: who treat the Product Goal as a precision instrument, who hold the Sprint Goal to a single clear objective, who let the Analyst Agent sharpen every item before it reaches a Developer, and who push their Definition of Done all the way to production with telemetry wired in.

Scrum was always a framework for managing complexity under uncertainty. That problem has not gone away. It has just found a much more capable team.